When “Help” Becomes a Weapon

Facebook "Suicide Prevention" Tool Sends Ominous Message

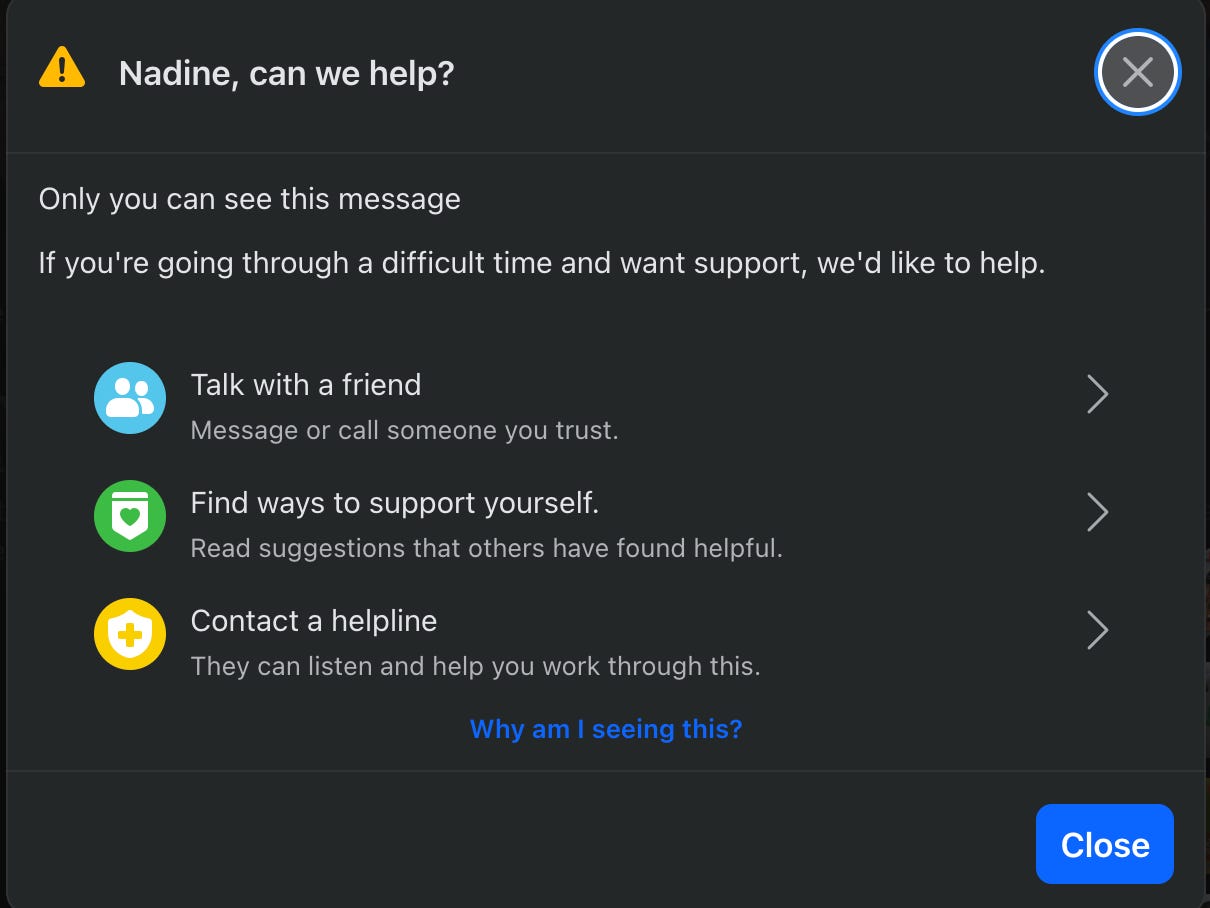

For the past week, I’ve been getting urgent pop-ups from Facebook asking if I need support.

They open with a warning icon and my name.

“Nadine, can we help?”

The message assures me that only I can see it. It offers to connect me to a friend, suggests coping tools, and links to a crisis line. The tone is careful and protective.

The first time, I ignored it. I assumed Meta was being hypervigilant given the scrutiny over the mental health impact of its platforms. I figured the system was casting a wide net.

By the third alert, it was clear this wasn’t random.

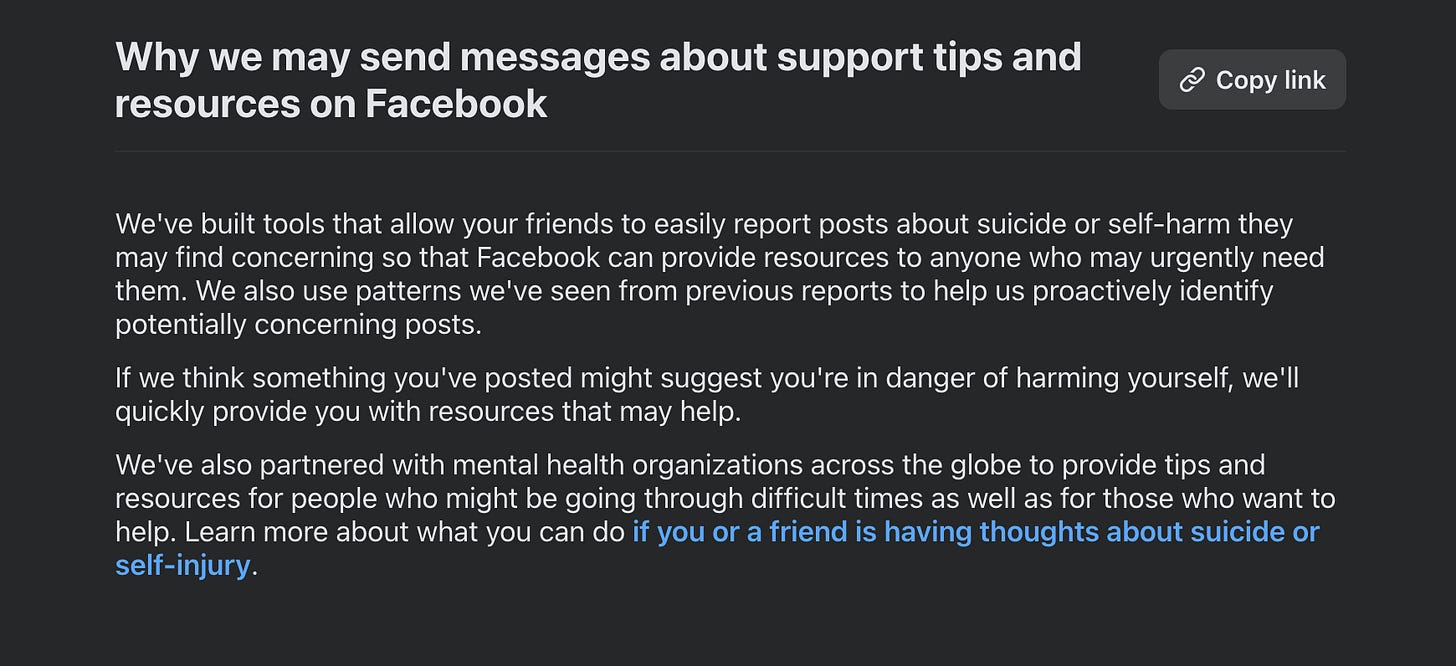

When I clicked “Why am I seeing this?” the explanation was simple. Facebook allows users to report posts they believe indicate suicide or self-harm. The company also relies on automated pattern detection. If the system concludes you might be at risk, it sends resources.

Which brings me to something I never expected to have to say publicly, but these times, and the unease I feel about receiving these prompts, compel me to say it:

I am not in crisis. I love my life. My mental health is strong. I have a deep and steady community around me. I am not expressing self-harm.

There is nothing in my writing that would reasonably trigger concern. Which leaves one conclusion: someone or some organization is repeatedly reporting me as a danger to myself.

That realization changes the feeling entirely.

A Familiar Playbook

We have seen wellness checks misused before. We have seen systems built to protect people turned into instruments of intimidation, whether through swatting, false abuse reports, or coordinated mass reporting campaigns meant to trigger takedowns, all framed in the language of safety. This feels adjacent to that pattern.

Platforms create reporting tools so users can flag genuine danger. Those tools depend on good faith. Trolls or bots exploit them. The target receives official-looking alerts that imply instability or vulnerability. The aggressor remains anonymous. The platform appears responsible.

If you are visible and outspoken, especially if you challenge powerful institutions, the message is not neutral. It can subtly position you as unstable. It can raise doubts about how others might interpret your words. And it makes clear that someone is watching closely enough to activate the system against you.

This is not just a pop-up. It is a reminder that critics can reach into the platform’s infrastructure and generate alerts about your mental state.

For someone with a public profile, that has reputational implications and functions as psychological pressure delivered under the banner of care.

Scalable Harassment

Here is my larger concern: How scalable is this?

If coordinated actors decide to repeatedly flag journalists, organizers, elected officials, or critics as suicidal, what follows? Does the algorithm escalate? Are accounts quietly restricted? Do internal “risk” signals attach to a profile in ways the user cannot see? Does it affect visibility, moderation decisions, or ad systems?

We are in a period where tools built for protection can be redirected toward harassment. Reporting systems, trust and safety mechanisms, and automated detection all operate inside black boxes and can be gamed.

The message tells me only I can see it. That is meant to reassure. It also means the harassment is private. No one else witnesses it. There is no public record of the false reporting. It is contained and deniable.

That is precisely why it is so disturbing.

Why I’m Saying This Out Loud

I am naming this for two reasons.

First, as a precaution, to state clearly that I am well.

Second, to ask whether others are seeing the same pattern.

Have you received repeated crisis pop-ups without cause?

Have you noticed coordinated reporting?

Has anyone at Meta explained what guardrails exist to prevent malicious use of self-harm reporting tools?

If this is an emerging tactic, it deserves attention before it becomes normalized.

Not Neutral; Not Helpful

Technology companies tend to present safety tools as objective systems that simply enforce rules. In reality, those tools exist inside highly polarized environments where organized actors test their limits, exploit their gaps, and use them strategically in political fights and harassment campaigns.

Any anonymous reporting system can be abused. The real question is whether platforms are willing to acknowledge that reality and design meaningful safeguards.

Crisis tools are essential and they do save lives, but when someone can trigger them in bad faith, the experience stops feeling like support and starts to feel like a warning. Applied quietly and repeatedly, it is a form of harassment.

If you are experiencing this, let me know.

Cancel meta

I have been playing with the platform for over a year or so and have recognized quite a few concerning flaws in the system. Most recently, I was flagged for commenting in a community group and was asked if I lived in the area. Why would that be asked when I have shared posts and commented in it for years? Is it because my comment was "political," even though it was in reference to the political post that was shared? I'd say so. I am not the only person it has happened to either.

On top of that, I have noticed that the words, messaging, and posts I share or comment on affect the accounts I see. It took a week for me to start seeing propaganda in my algorithm and about a week to get back to my normal view. I have also witnessed this with Google over the past 4 or 5 years. It was pretty amusing at first... Then I realized that it was trying to guess what I liked, but I was too random for it.

With that said, given how the administration talks about derangement (they and their supporters surely don't recognize the irony in their word choice), plus the hand-joining with social media executives, I would not put it past them to be flagging certain words and messages. All they need is a pattern and they can apply it.

I am curious if more people are experiencing this.